What Is the NIST AI Risk Management Framework?

Artificial intelligence is moving quickly from experimentation to everyday use. As AI systems influence decisions and automate processes, the question leaders face is no longer whether to use AI, but how to manage the risks that come with it. That challenge has pushed organizations to seek practical guidance on AI risk mitigation, and the NIST AI Risk Management Framework has emerged as a widely trusted reference point.

The NIST Artificial Intelligence Risk Management Framework (AI RMF) was released in January 2023 by the U.S. National Institute of Standards and Technology. While its adoption is voluntary, it provides a defensible governance foundation that helps organizations get ahead of mandatory regimes rather than reacting to them. Instead of prescribing a checklist, the framework focuses on building the capabilities organizations need to govern AI responsibly across its lifecycle.

Why the NIST AI Risk Management Framework Matters

Organizations want to benefit from AI while avoiding unintended consequences related to bias, reliability, and transparency. The push for governance is no longer abstract; it’s being driven by a growing patchwork of concrete regulatory requirements. The EU AI Act, the Colorado AI Act, and NYC Local Law 144 are just a few examples of laws demanding stronger oversight and clearer accountability for AI-driven decisions.

The NIST AI Risk Management Framework responds to these demands by providing a common structure for identifying, assessing, and managing AI risks. It helps organizations move from intention to execution by translating high-level principles into repeatable practices that stand up to scrutiny.

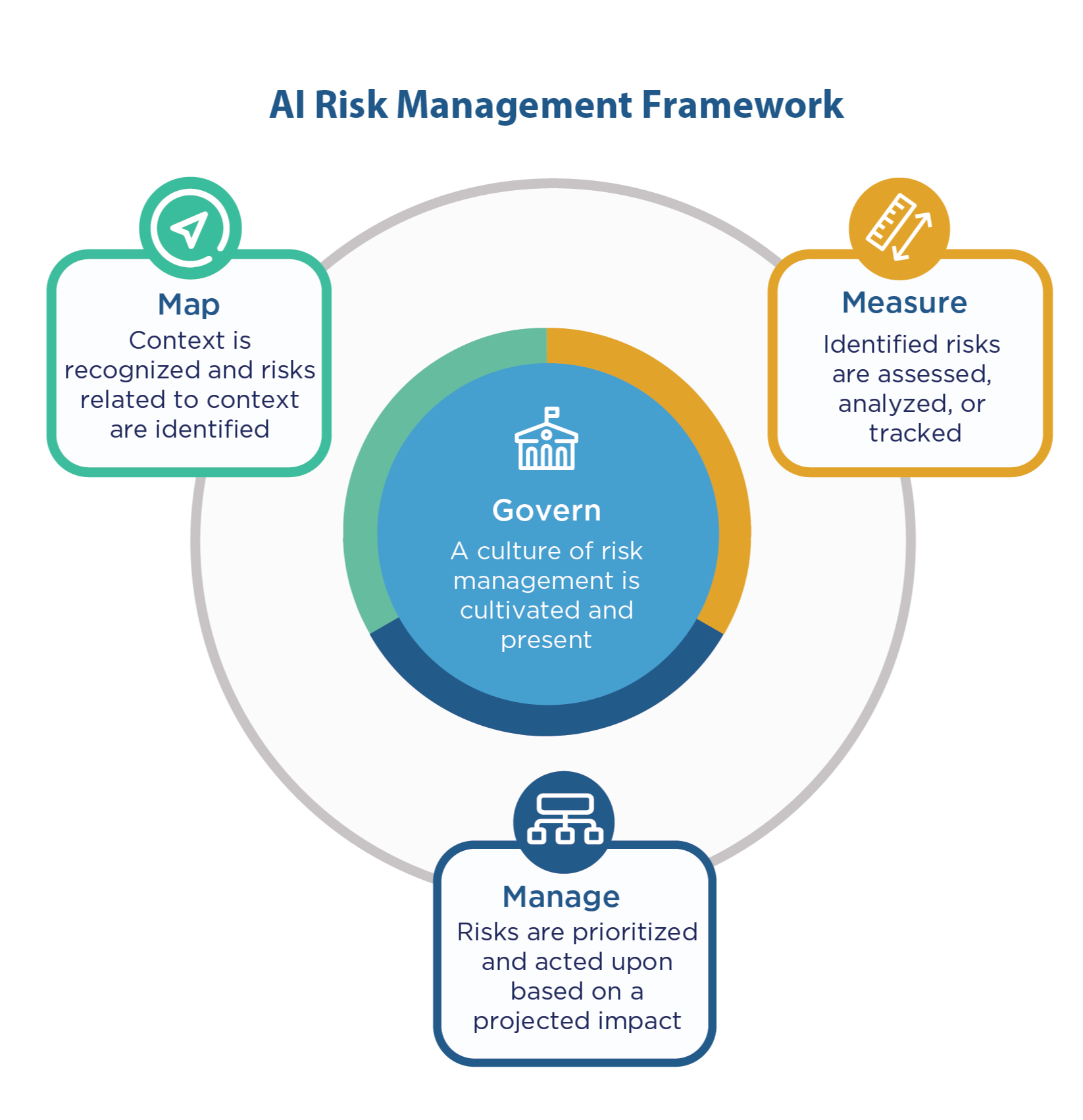

4 Core Functions of the NIST Artificial Intelligence Risk Management Framework

At its center, the NIST AI RMF outlines four functions that describe how to manage risk across the AI lifecycle. These functions are designed to be flexible and iterative, making them applicable to traditional machine learning, third-party models, and generative AI systems.

1. Govern

The Govern function establishes accountability and oversight for AI risk management. It focuses on defining clear ownership, aligning AI use with organizational values, and ensuring decision-making authority matches the organization’s risk appetite.

2. Map

The Map function emphasizes understanding context. It helps organizations identify where and how AI is used, who may be impacted, and what risks may emerge. Mapping creates the visibility needed for an informed risk assessment.

3. Measure

The Measure function addresses how AI risks are assessed, analyzed, and monitored. Measurement activities are mapped to the seven trustworthy AI characteristics the framework seeks to promote: valid and reliable; safe; secure and resilient; accountable and transparent; explainable and interpretable; privacy-enhanced; and fair with harmful bias managed. This includes evaluating model performance, bias, robustness, and security to ensure risks are identified early and tracked as systems evolve.

4. Manage

The Manage function focuses on action. It centers on prioritizing risks, implementing controls, responding to incidents, and making informed trade-offs when residual risk remains. Managing AI risk is continuous, requiring organizations to adapt as AI systems and regulatory expectations change.
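The four functions can be pictured as fields and operations on a simple risk register. The sketch below is illustrative only: the AI RMF does not prescribe a scoring method, so the 1-to-5 likelihood and impact scales, the `appetite` threshold, the `Risk` and `prioritize` names, and the example systems are all hypothetical assumptions rather than anything defined by NIST.

```python
from dataclasses import dataclass

# The seven trustworthy AI characteristics named in the framework.
CHARACTERISTICS = {
    "valid and reliable",
    "safe",
    "secure and resilient",
    "accountable and transparent",
    "explainable and interpretable",
    "privacy-enhanced",
    "fair with harmful bias managed",
}


@dataclass
class Risk:
    system: str          # Map: which AI system the risk belongs to
    description: str     # Map: what could go wrong, and for whom
    characteristic: str  # Measure: trustworthy characteristic at stake
    owner: str           # Govern: accountable person or team
    likelihood: int      # Measure: 1 (rare) to 5 (frequent), assumed scale
    impact: int          # Measure: 1 (minor) to 5 (severe), assumed scale

    def __post_init__(self):
        if self.characteristic not in CHARACTERISTICS:
            raise ValueError(f"unknown characteristic: {self.characteristic}")
        for name in ("likelihood", "impact"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood times impact.
        return self.likelihood * self.impact


def prioritize(risks, appetite=8):
    """Manage: return risks scoring above the appetite, highest first."""
    above = [r for r in risks if r.score > appetite]
    return sorted(above, key=lambda r: r.score, reverse=True)


# Hypothetical register entries for illustration.
register = [
    Risk("resume-screener", "Lower selection rate for one applicant group",
         "fair with harmful bias managed", "HR analytics", 3, 5),
    Risk("support-chatbot", "Model states an incorrect refund policy",
         "valid and reliable", "Customer support", 4, 2),
    Risk("support-chatbot", "Prompt injection exposes internal notes",
         "secure and resilient", "Security", 2, 5),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.system}: {risk.description} (owner: {risk.owner})")
```

In practice, an organization would replace the assumed scoring rule with whatever measurement approach its own governance process defines, and would re-run the prioritization as systems and regulatory expectations change.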

Introducing Profiles and the Generative AI Profile

To make the framework more actionable for specific use cases, NIST has introduced the concept of Profiles, which tailor the core framework to a particular sector or technology. The most prominent example is the Generative AI Profile (NIST AI 600-1), released in July 2024. As a companion to the AI RMF, it provides specific guidance for managing the unique risks of GenAI, making it the most relevant extension for organizations deploying large language models and other generative tools today.

How the NIST AI Risk Management Framework Aligns with Other Frameworks

The NIST AI RMF is designed to complement existing risk and compliance frameworks, not replace them. Many organizations already rely on the NIST Cybersecurity Framework (CSF) or internal model risk management programs. The AI RMF extends these efforts into areas such as fairness and explainability.

It also pairs naturally with certifiable management system standards. ISO/IEC 42001, for instance, provides the formal structure for an AI management system, while the AI RMF offers the framework for executing its risk management principles. Similarly, it aligns with broader security standards like ISO/IEC 27001. By mapping AI risks across frameworks, organizations can create a cohesive governance approach that scales.

Turning the NIST AI RMF into a Sustainable Governance Capability

The NIST AI Risk Management Framework is not about slowing innovation; it is about enabling organizations to scale AI with confidence. By standardizing how AI risks are identified, assessed, and managed, the framework helps teams build production-ready systems that can withstand scrutiny. As AI adoption accelerates, treating AI risk management as a core governance capability is essential for building trust and demonstrating control.

SAI360 helps organizations operationalize the NIST AI Risk Management Framework now, right alongside established standards like the NIST CSF and ISO/IEC 42001. With integrated risk, controls, policy, and incident management, SAI360 enables a consistent, auditable approach to AI governance that scales with your organization. Schedule a demo today.
